What I Think Intelligence Is

Not Yet, Not Like This

Even after my exchange semester, I wasn't ready to go back to Korea. So I got an internship at an immigration law firm in the U.S.

Washington, D.C., 2017. The kid who couldn't order at Subway, deciding not to go home yet.

Most of my work was marriage-based green cards: helping couples prove to the government that their marriage was real. I did what any diligent intern would do. I looked up the rules. Read everything USCIS published (they're the agency that decides who gets to stay in America). They told me what to submit. But they never told me what actually mattered.

One evening I pulled two case files off the shelf and spread them side by side on my desk. Same types of documents: joint bank statements, wedding photos, affidavits from friends. One couple got approved. The other denied. I went back and forth between them, looking for the difference. Couldn't find it.

So I tried harder. Textbooks. Old case decisions. Checklists I color-coded by hand. Nothing helped. Whatever separated approval from denial wasn't in any rule I could find.

Austin, 2018. The best advice I ever got.

One day, the attorney I worked for looked at me and said something that stuck with me for years.

"I don't get why Koreans obsess over rules and guides. You learn by doing the work. You see enough cases, you start to pick up what matters."

That was hard to accept. I grew up in Korea, where foundations come first. You master the basics, learn the concepts, memorize the rules. Application comes last, almost as an afterthought, because if your foundation is solid, the rest takes care of itself. Every instinct I had pushed me back to the guidelines. But here was this American attorney telling me to just practice law. Not study it. Practice it.

But nothing else was working. So I started pulling approved files. Hundreds of them. Just reading. I don't know when it started. But at some point, I wasn't just reading the files anymore. I was sensing weight.

A couple with barely any wedding photos but years of joint tax returns and a shared bank account? Strong. A couple with hundreds of photos but separate finances? Weaker than it looked.

After a while, I didn't even need to finish reading a file. I'd open one, flip through the first few pages, and something in me already knew. Nobody taught me this. I never read it in any guideline. It was just there, running on its own, and I had no idea where it came from. Eventually the attorney stopped checking my work.

And then it hit me. This wasn't just law.

My English had done the same thing. Months of real conversation, no grammar drills, and one day I just stopped translating in my head. Sentences came out before I could think about the rules behind them. I never decided to stop translating. It just happened.

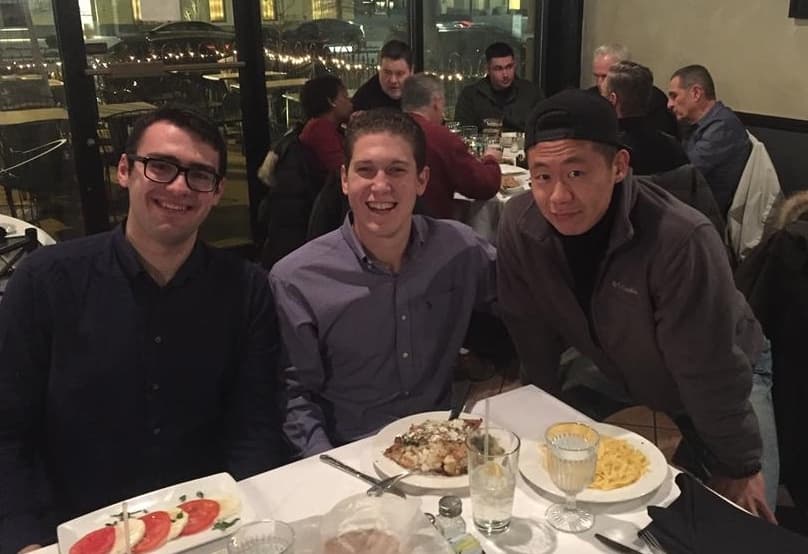

Where I actually learned English: talking to these guys.

The gym too. Never took a class. Never hired a trainer. The first time I tried bench press, I watched the guy before me, copied his grip width, and started pressing. Felt fine. Added more weight. And more. Until the bar came down and didn't go back up. I was trapped under it, arms giving out, groaning loud enough for the whole gym to hear. A stranger ran over and pulled it off me. I sat up, muttered thanks without looking at him, and moved to the other side of the gym.

But I kept showing up. Watched. Tried. Adjusted. Within a couple of years I hit the 1,000-pound club, a combined squat, bench, and deadlift total that most lifters chase for years. Ask me to explain proper form and I'll stare at you. But put a barbell in front of me and my body knows exactly what to do.

Law. Language. Lifting. I hadn't noticed it before, but the same invisible thing had been running underneath all of them. Learning without being taught. Knowing without being able to explain. I knew something was there. I just didn't have the words for it yet.

Oh. So That's What It Is.

This was around 2018. Years before ChatGPT would make any of this obvious.

I was supposed to be finishing my political science degree. Instead, I'd been skipping my own courses and sitting in on anything about science and technology. I couldn't stop thinking about those files: how I'd learned to sense which cases were strong without anyone teaching me. That's what led me to Ray Kurzweil's How to Create a Mind.

Ray Kurzweil. Takes 100 pills a day to live long enough to see the Singularity. Has been obsessed with AI since the 1960s. Genius and total geek.

His idea was simple, and it hit me like a truck:

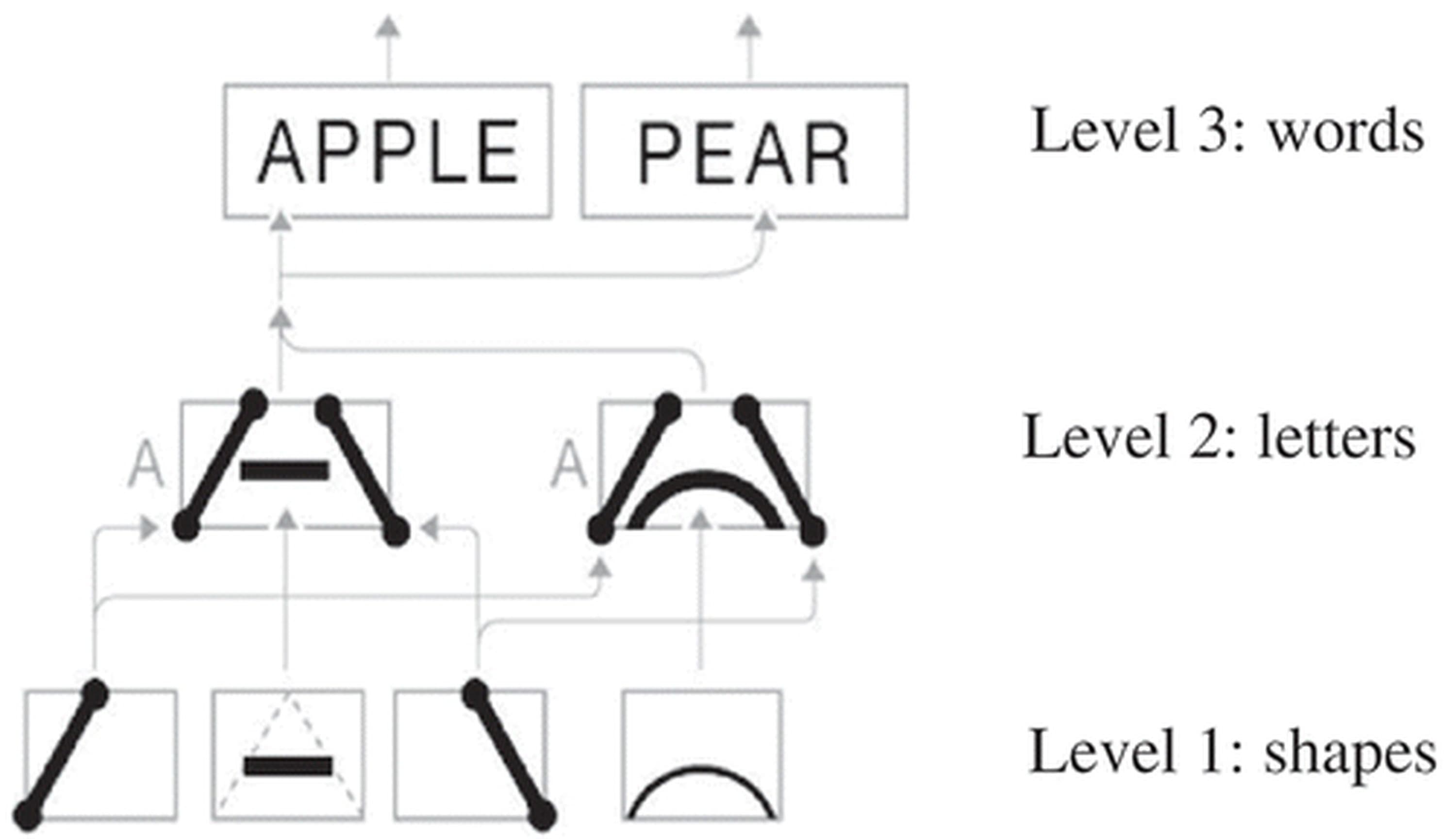

Think of your brain as a building with 300 million tiny workers, stacked on floors.

A worker on the ground floor stares at raw input: light, sound, pressure. She says: "I see an edge." That's it. That's her whole job. She passes the result up one floor.

The worker above takes that edge, combines it with others: "Those edges make a curve." Passes it up. Next floor: "That curve is part of a letter." Next: "Those letters spell a word." Next: "That word, in this sentence, is sarcasm."

How the brain reads: shapes become letters, letters become words. Each level only sees patterns from the level below.

I put the book down.

Each worker does one tiny thing: spot a pattern, pass it up. But stack 300 million of them, and you get a brain that reads a novel and cries. Or glances at an immigration file and just knows.

For the first time, I had a name for what I'd been feeling. Intelligence isn't a mystical spark. It's pattern recognition stacked on pattern recognition.

And suddenly the attorney's words hit different.

You see enough cases, you start to pick up what matters.

He wasn't just giving career advice. He was describing how brains actually work.

But this was still one book and my own gut feeling. For all I knew, I was seeing what I wanted to see. I held onto the idea anyway. For years.

Geoffrey Hinton delivering his Nobel Prize lecture, December 2024. He called us analogy machines, not reasoning machines.

In 2024, Geoffrey Hinton shared the Nobel Prize in Physics with John Hopfield for foundational work on artificial neural networks, the payoff of some fifty years spent trying to make machines learn the way brains do. I won't pretend I followed all the science. But his conclusion sounded like something I'd been quietly thinking since that law firm in Austin.

We're not reasoning machines, he said. We're analogy machines. We don't think by logic. We think by resonance: the present moment vibrating against everything we've ever experienced, and our brains going with whatever rings familiar.

Fifty years of research. A Nobel Prize. And the conclusion matched something I'd picked up sorting green card applications. It was nice to know I wasn't crazy.

But theory was one thing. Was anyone actually building this?

Andrej Karpathy, a founding member of OpenAI and former head of AI at Tesla, had been watching machines make the same shift I did. He put up one slide, and I saw my entire life in it.

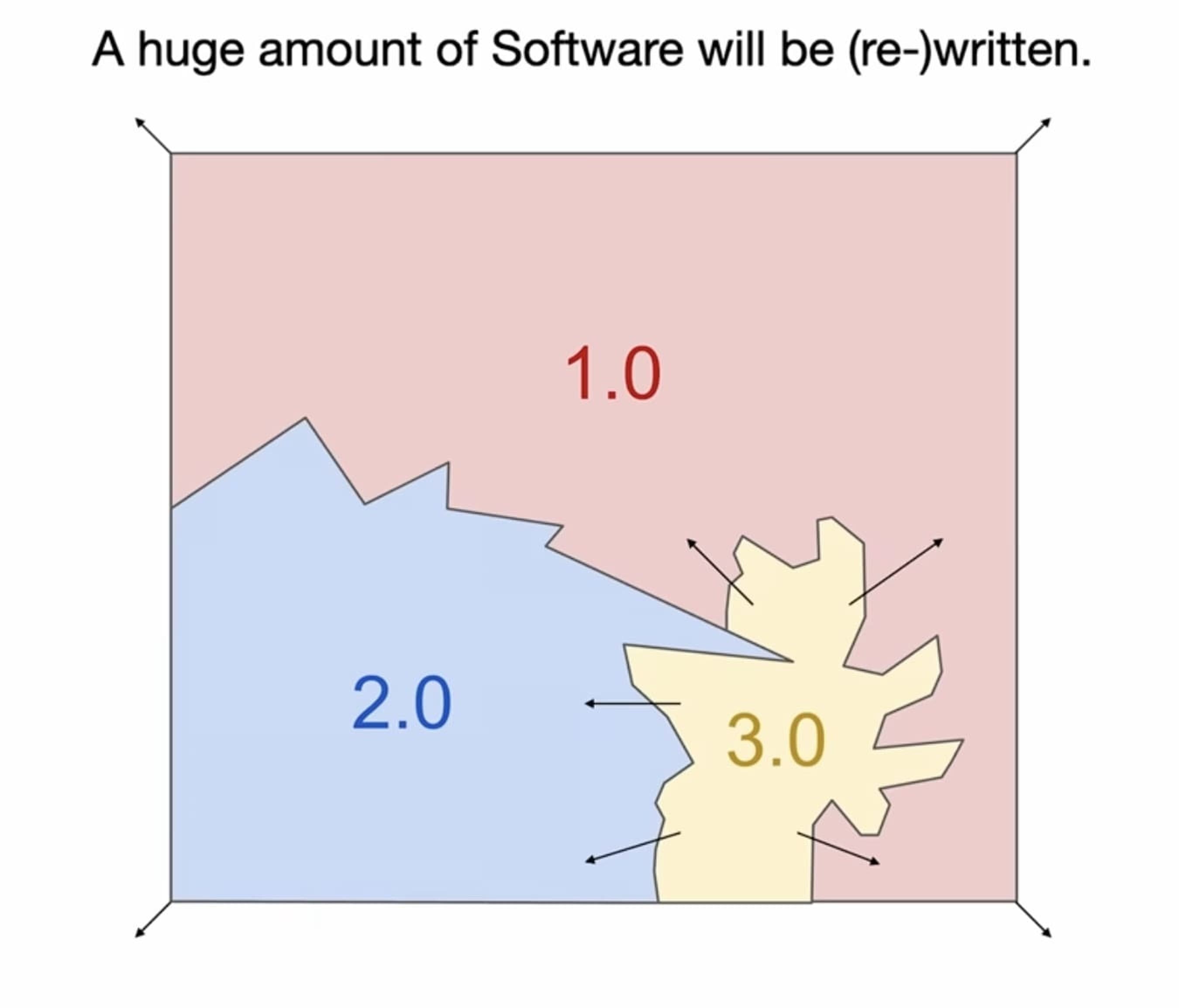

From Karpathy's YC AI Startup School talk, 2025. Software 1.0 (rules), 2.0 (learned patterns), 3.0 (plain-English prompts).

He described three eras of software. In 1.0, you tell the machine how: every step, every rule, written by hand. In 2.0, you show the machine what: feed it millions of examples and let it find the patterns itself. In 3.0, you tell it what you want, in plain English, and it figures out the rest.

At Tesla, he led the shift in real time. Driving was supposed to require human judgment. But as the neural networks learned to handle more of it, they outperformed the code engineers had written by hand, and the team started deleting that code. Rules lost. Patterns won.

I looked at my own life through this lens.

Korea taught me 1.0: follow the textbook, memorize the rules, apply them. I froze at Subway.

The attorney taught me 2.0: forget the rules, just do the work, the patterns will come. I learned to sense the weight of an immigration file.

And now we're in 3.0: machines that learn patterns and decide what to do with them.

I dropped out of law school and bet everything on it.

Please, Not Math

Once I had this lens, I couldn't stop testing it. Pattern recognition explained language, obviously. Social cues. Lifting. It fit everything I threw at it.

But there was one thing I kept avoiding. Math.

Math doesn't care what you feel. It doesn't care who you are. You start from axioms, follow the rules, and the answer is the answer. Cold. Airtight. No room for intuition.

I was stuck. If this couldn't survive math, the whole thing fell apart. But if it could, then nothing we do is special. Intelligence isn't a sacred spark. It's just a process. And anything that's just a process can be built by a machine.

Either way, I was going to lose something.

So I went looking. And I found Euclid.

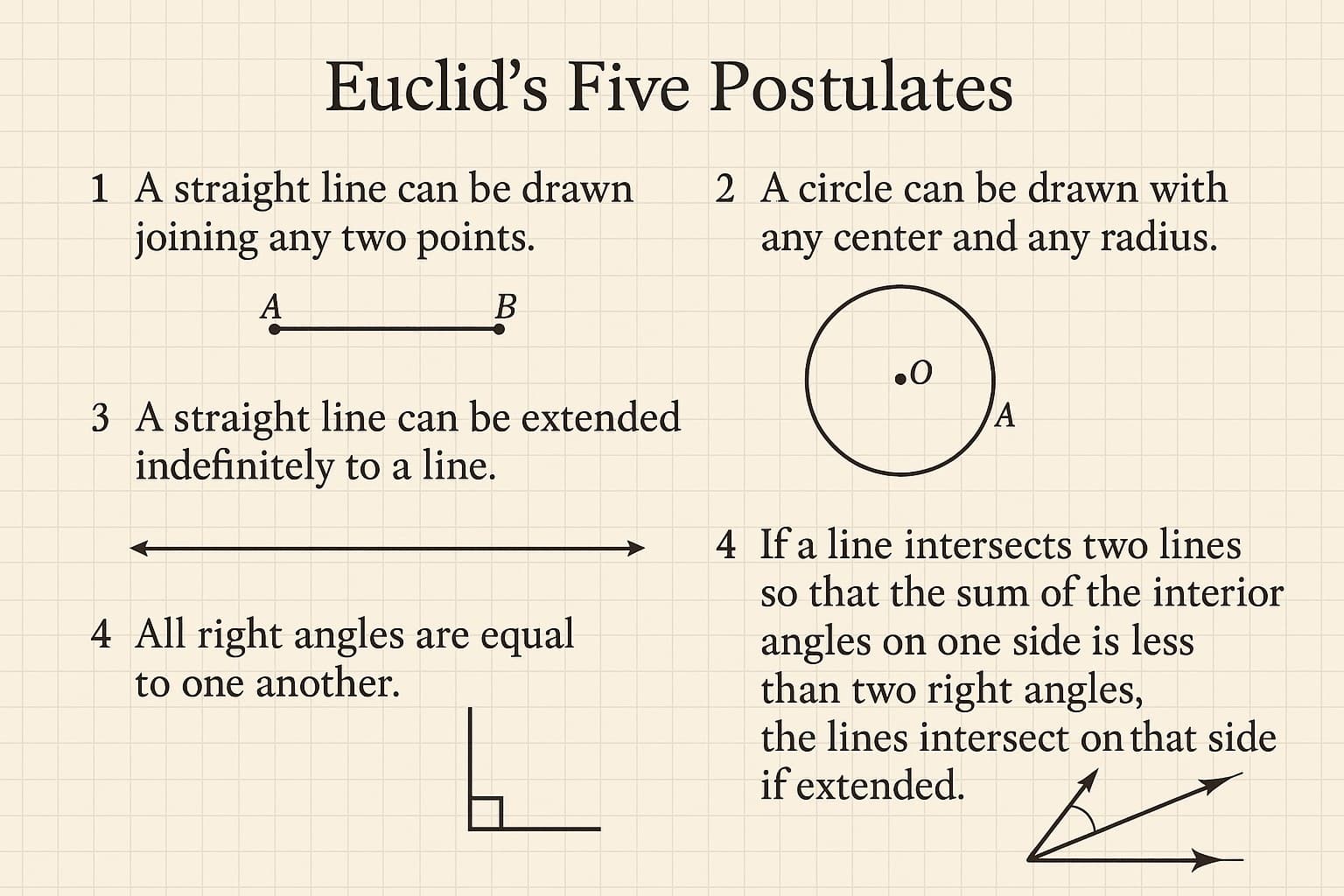

Euclid's Five Postulates. 2,300 years old. Still unproven. The entire history of geometry stands on these gut feelings?

Euclid didn't start with a proof. Picture him starting with a straightedge instead. He drew a line between two points. Did it again. Different points, different distances. The line always connected them.

He couldn't prove why. He just noticed it kept being true. So he called it "self-evident" and used it as his starting point.

That starting point became the foundation of geometry for the next two thousand years.

The most rigorous, logical structure humans have ever built. Resting on a pattern so consistent that no one thought to question it.

I'd been looking for the exception. I found the opposite.

Proving a theorem? That's following rules. But finding the theorem in the first place? That's noticing a shape in the noise, and guessing it might always hold. The guess comes first. The proof comes after.

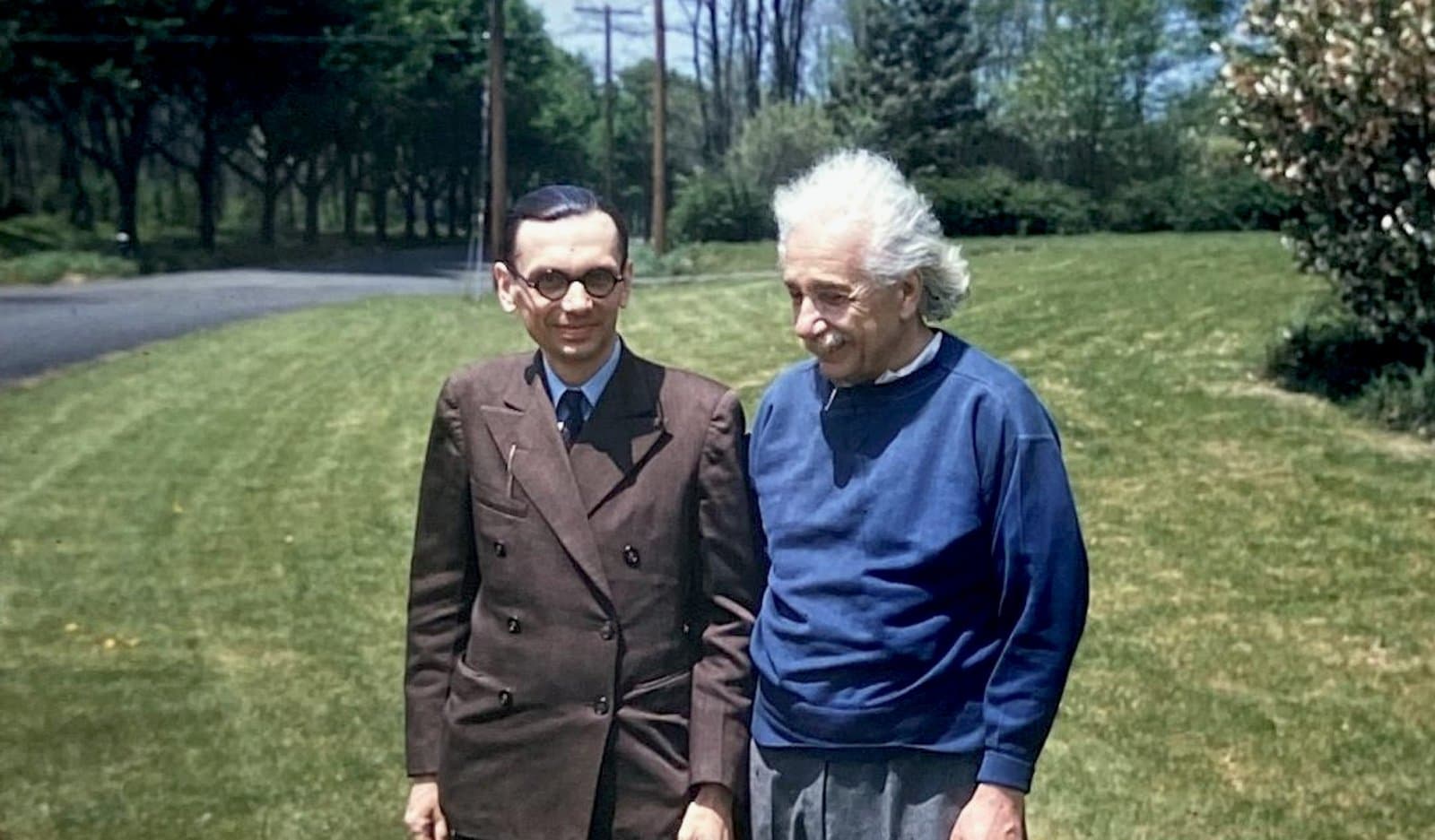

I don't fully get what these two were talking about on their walks, but apparently math is broken and spacetime is weird. Kurt Gödel (left) and Albert Einstein at Princeton.

Gödel asked a harder question. Euclid's starting points were assumptions, fine. But what about the systems mathematicians had built since? In the early twentieth century, David Hilbert and others set out to prove that mathematics, starting with arithmetic, could be made complete: start from the right axioms, apply logic, and every truth is reachable. No gaps. No guesswork.

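Gödel proved they'd never finish. No matter how carefully you choose your rules, as long as they're consistent and strong enough to do basic arithmetic, there will always be true statements sitting just outside their reach. You can see they're true. But you can't prove them with those rules. Add a new rule to catch one, and another slips out. Every time.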

Even math, the most airtight system humans ever built or found, eventually hits a wall where someone has to look at something and just know.

That was enough for me.

The gut feeling I'd spent years building was pattern recognition. A process. And any process can be built by a machine.

That's what I think intelligence is.